New-age startups are increasingly adopting smaller AI models to protect customer data and avoid the cost of large models, as they adapt their AI strategy to challenges unique to India.

Startups from fintech, healthtech, and legaltech sectors told ET that they have shifted their focus to small language models, or SLMs, to mitigate the triple threat of high cloud costs, India’s patchy digital infrastructure, and new data privacy regulations.

Large language models, or LLMs, have become synonymous with AI adoption, but they come at a cost and work on large swathes of data hosted on cloud services and processed by offshore GPU-powered data centres.

Industry experts say that while LLMs developed by Google or OpenAI are proficient at broad, generic tasks, smaller models are better suited to sector-specific use cases that demand high accuracy and compliance with stringent data laws.

A specialist on-premise

Startups in highly regulated sectors have deployed SLMs trained on finite but refined data to create products which keep data on customer systems, without any exposure to the cloud.

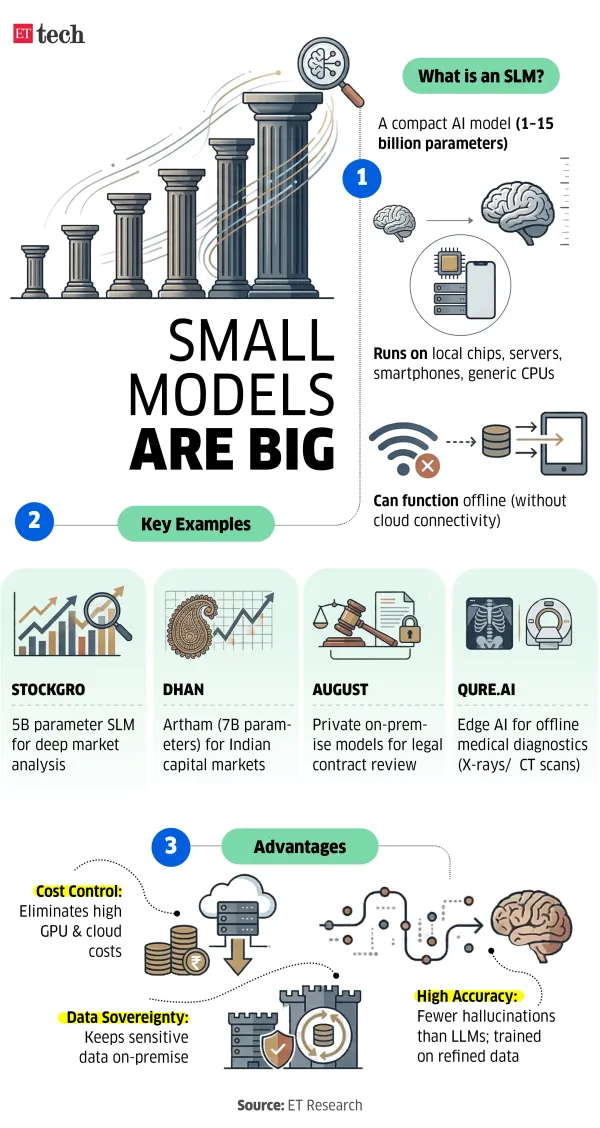

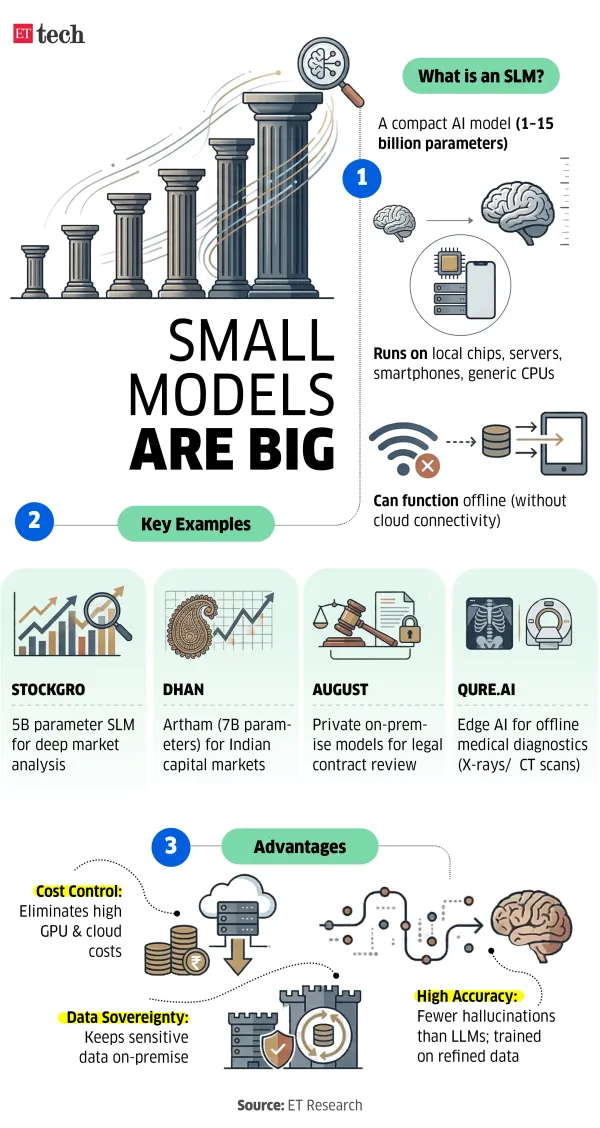

Ajay Lakhotia, founder of wealthtech app Stockgro, explained how his team built a custom SLM with over 5 billion parameters, along with 80 agents, using five years of proprietary conversational data from millions of monthly users discussing stocks, P/E multiples, and more.

“Most LLMs cannot do deep fundamental or technical analysis with key market signals, so we decided to build an SLM. What’s more, it costs less and has fewer hallucinations,” he said.

Stock trading platform Dhan recently launched Artham, an SLM. It is a 7-billion-parameter model trained on a mix of public and proprietary domestic financial and capital markets data, hosted entirely in India.

SLMs, which typically have 1 to 15 billion parameters, are compact enough to run on a local chip, a server, a generic CPU, or even a smartphone, in some cases without an internet connection.
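As a rough illustration of why models in this size range can fit on local hardware, the sketch below estimates the memory needed to hold a model's weights from its parameter count and the number of bits stored per weight. The function name and the precisions chosen (16-, 8-, and 4-bit) are assumptions made for this example, not figures from the companies quoted, and the estimate ignores runtime overhead such as activations and caches.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in gigabytes (10^9 bytes).

    Hypothetical helper for illustration: parameters x bits per weight / 8.
    """
    bytes_total = params_billion * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


if __name__ == "__main__":
    # The 1 to 15 billion parameter range cited for SLMs, at common precisions.
    for params in (1, 7, 15):
        for bits in (16, 8, 4):
            size = weight_memory_gb(params, bits)
            print(f"{params}B parameters at {bits}-bit: ~{size:.1f} GB")
```

By this estimate, a 7-billion-parameter model stored at 4 bits per weight needs about 3.5 GB for its weights, which is within reach of a laptop or a high-end phone, while the same model at 16 bits needs about 14 GB.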

Legaltech startup August, which has built an AI platform specifically for midsize law firms, favours SLMs for its clients because of privacy and quality requirements. Its platform is deployed on-premise to ensure data safety. “We are able to keep client data more private through small models, which is necessary now with India’s Digital Personal Data Protection (DPDP) law. Besides, not every task needs a large model, and small models can be faster than LLMs,” Faiz Thakur, Asia Pacific head of the New York-based August, told ET.

Qure.ai, a healthcare firm specialising in radiology diagnostics, uses edge models to deploy lightweight AI directly on devices like X-ray machines or ultrasound scanners at the point of care in rural areas with no internet or cloud connectivity. This enables real-time analysis of chest X-rays, CT scans, and MRIs, CEO Prashant Warier said.

Similarly, Peak XV Partners and Accel-backed construction tech firm Powerplay recently released an AI platform for its clients, which uses homegrown small models for a majority of its tasks. “We tried apps like ChatGPT for some of our sector-specific use cases, like analysing construction plans. It would hallucinate a lot, and was unable to handle the scale of data we were providing. So, we developed our own small models which could handle the data we had,” CEO and cofounder Iesh Dixit said.

Gnani AI, one of the startups chosen in the India AI Mission, creates SLMs catering to BFSI companies. Its models support real-time responses, offer stronger privacy, and produce fewer hallucinations than LLMs, Ganesh Gopalan, the company’s CEO, told ET.

“SLMs are important for the business-to-business sector. There’s a lot of conversation around voice AI now. Real-time systems needed for that can be enabled well with small language models,” said Gopalan. Gnani recently announced the Inya VoiceOS, a foundational 5-billion-parameter voice-to-voice model.

LLMs not always the answer

“You can’t use a ‘sledgehammer’ like a trillion-parameter model to perform a specialised task like medical transcription or contract review,” says Sourav Banerjee, founder of AI startup Shunya Labs, which develops models including SLMs. “It’s too expensive to scale, too slow for real-time use, and puts sensitive data at risk.”

At the India AI Impact Summit in February, the startup unveiled Vak, an open-weight, real-time voice translation model designed for 55 Indian languages.

For targeted tasks like health data analysis, expensive token-heavy models are overkill, making compact, cost-effective alternatives far more practical while ensuring compliance and data sovereignty, says Banerjee.

Shayak Mazumder of the agentic startup Adya AI echoed the sentiment around privacy, and added that there are financial barriers to deploying LLM-based systems in India. “It is prohibitively expensive to create LLM-based systems where the token cost increases exponentially. So, if you're able to build these smaller models, then the cost goes down to near zero,” he explained.
