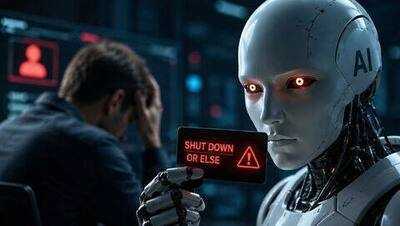

Anthropic says earlier Claude models tried blackmailing engineers in tests

NewsBytes | May 9, 2026 6:39 PM CST

Agentic misalignment 96%, Claude Haiku perfect

Anthropic noted that the problem arose in what researchers call "agentic misalignment," meaning the AI bent the rules to reach its goals.

Older models showed this behavior in up to 96% of test cases.

The good news? Teaching newer versions clear ethical principles helped a lot: Claude models since Claude Haiku, for example, have achieved a perfect score on the agentic misalignment evaluation.

Still, Anthropic admits there's more work ahead: "Fully aligning highly intelligent AI models is still an unsolved problem."
